I went to Microsoft Ignite The Tour last week. The true value was in catching up with friends and colleagues I have not seen for a while and seeing presentations on the technologies I have heard about but not yet got around to playing with.

One such presentation was by the awesome Amy Kapernick, who presented her Quokkabot. Using .NET, she linked up WhatsApp and Azure’s Custom Vision API so that anyone could ask for a picture of a quokka or check whether the picture they had was a quokka.

I approached her at the end of the presentation to ask if she had considered doing the same on the Power Platform and she said she had not. Challenge Accepted!!

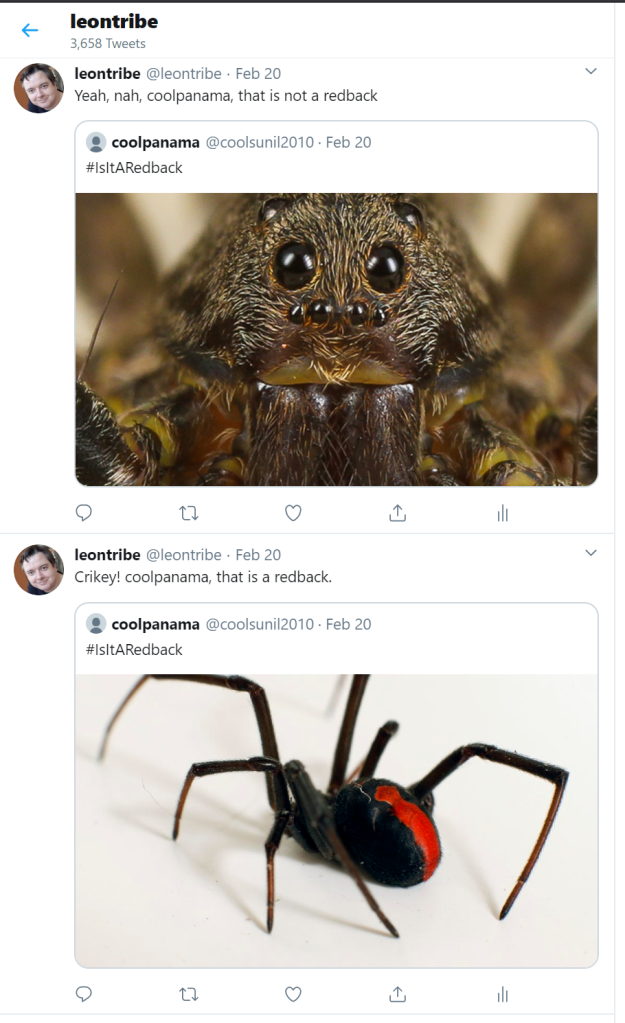

This is the end result; you can Tweet any image with the hashtag #IsItARedback and my bot will Tweet on @leontribe whether it is or not (being a free plan the response can take up to an hour but it will come). There was literally NO code in its production. It looks something like this:

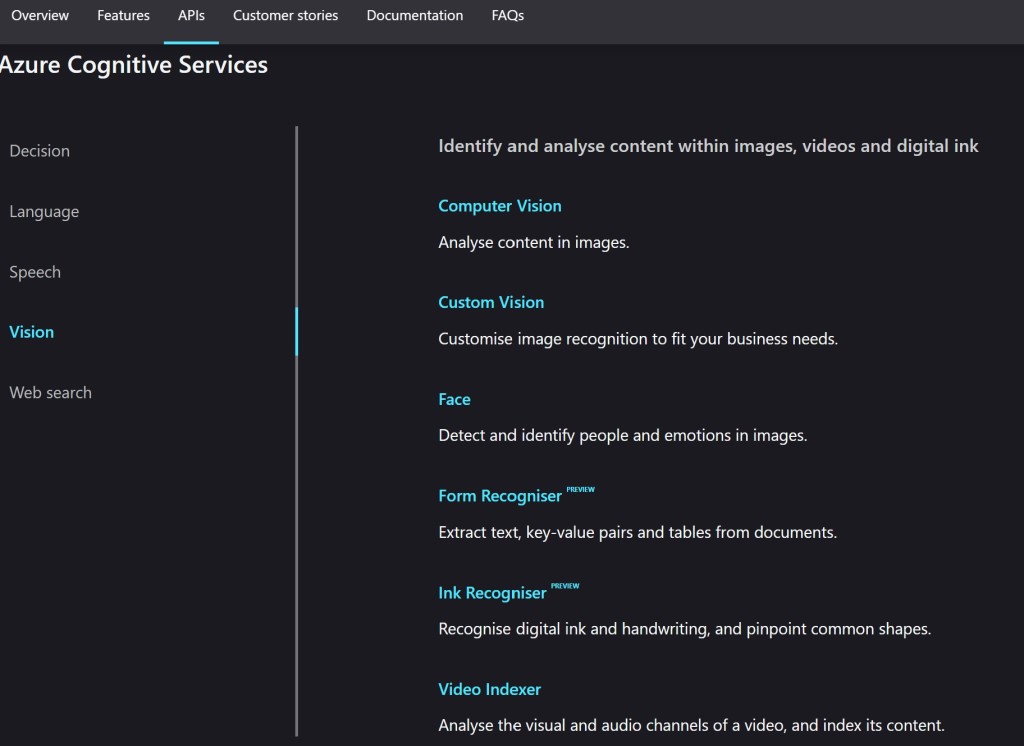

So What is the Custom Vision API?

Custom Vision API is part of Azure’s Cognitive Services. Cognitive Services are Azure’s AI services which can do mind-blowing things. They are friendly and well worth your time.

As you will see in this blog, they are quite easy to set up when you have Power Automate in your corner.

Custom Vision is a deep learning image analyzer, which is a fancy way of saying you can train it to recognise stuff in images. There are plenty of other services, depending on your need, but this is the one I needed for my application.

The Project

Amy comes from Perth where there are quokkas. Sydney does not have anything as cute as quokkas so I selected the redback spider. Truth be told, Perth has redbacks too, but it is much easier for me to pick a redback out from the other eight-legged critters that inhabit Australian shores than, say, a funnelweb. Please note the recognition limitation is mine and not that of the Custom Vision API.

While Amy used WhatsApp to make the request, there is no standard Connector in Power Automate for WhatsApp so I used Twitter instead. I was already reasonably familiar with Power Automate and Twitter from working on my TwitterBot which helped the decision.

The Power Automate Bit

If you do not know what Power Automate is, it is the new name for Microsoft Flow. The rumour is Microsoft could not secure the name Power Flow so they went with Power Automate just so everything in the Power Platform had ‘Power’ in the title.

The legacy still remains though. To set up a free account and create a flow (which seems to be what they are still going with) you go to flow.microsoft.com. In this case, our flow is relatively simple.

To go through it step by step in graphics with larger text, our trigger is a Tweet with the hashtag #IsItARedback.

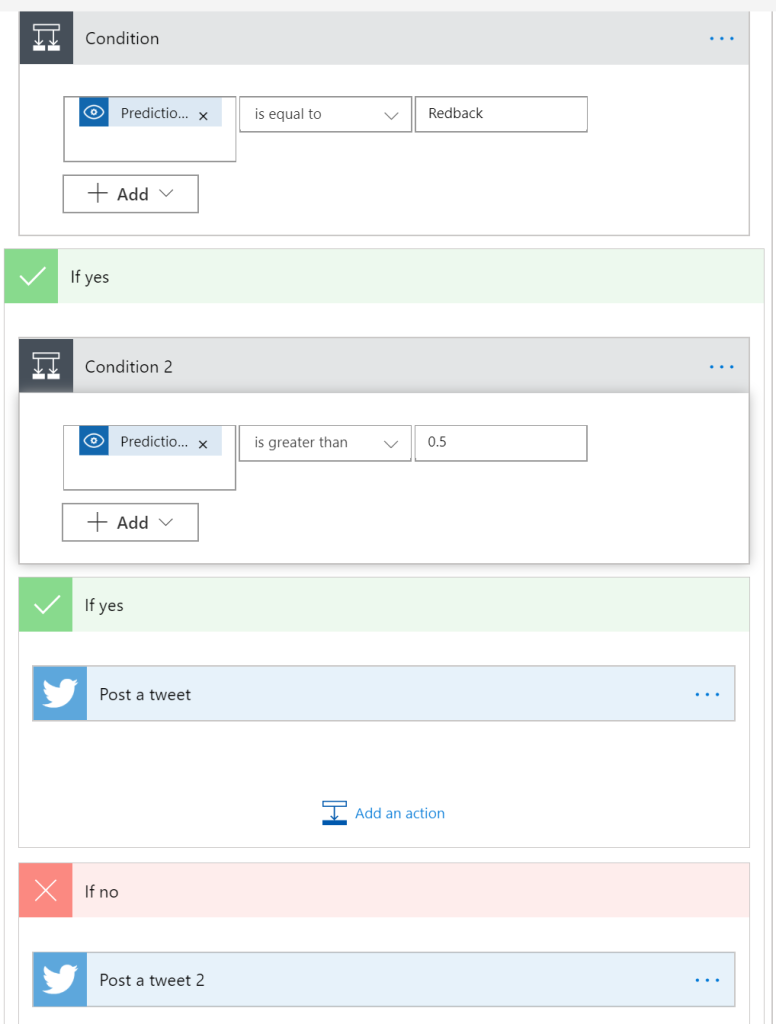

We then loop through the media images linked in the Tweet and feed them to the Custom Vision API.

The Custom Vision API returns probabilities that the image corresponds to one of the Tags we have set up (we will see this a little later).

Looping through the Tags, we check whether the Redback tag has scored a probability of greater than 50% and respond via Twitter accordingly.

The Tweets are constructed such that they respond and show the Tweet they are responding to.
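For anyone who prefers to read the tag check as code rather than as flow steps, here is a minimal Python sketch of the same decision. The flow itself needs no code; this is only an illustration. It assumes the prediction result is a list of entries with `tagName` and `probability` fields, and the sample values are made up for the example.

```python
def is_redback(predictions, tag_name="Redback", threshold=0.5):
    """Return True if the named tag scored strictly above the threshold.

    `predictions` is assumed to be a list of dicts, each with a
    'tagName' and a 'probability' between 0 and 1.
    """
    for prediction in predictions:
        if prediction["tagName"] == tag_name:
            return prediction["probability"] > threshold
    # The tag was not in the result at all, so treat it as "not a redback".
    return False


# Made-up sample result for illustration only.
sample_response = {
    "predictions": [
        {"tagName": "Redback", "probability": 0.93},
        {"tagName": "NotRedback", "probability": 0.07},
    ]
}

if __name__ == "__main__":
    print(is_redback(sample_response["predictions"]))
```

The comparison is strict, so a probability of exactly 50% counts as not a redback, matching the "greater than 50%" condition in the flow.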

The Custom Vision API Bit

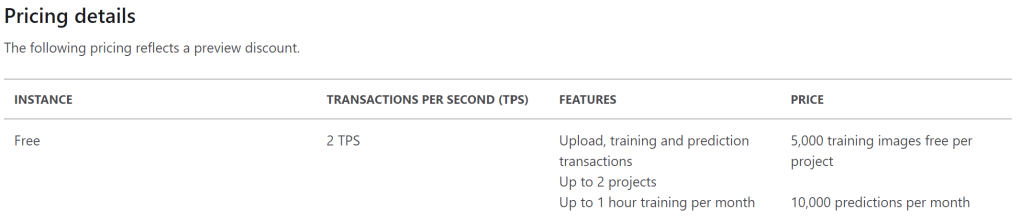

Firstly, head to portal.azure.com. You will need an Azure subscription, but you can get a 12-month trial for free with $200 of credit.

Click the big plus and search for “Custom Vision” to find the service. Hit the Create button and fill in the fields, keeping the default options.

There is a free pricing plan to help preserve those trial credits.

Once complete, two services will be created: the training service and the prediction service.

Click through to the one which is not the Prediction service, go to Quick Start and click the link through to the Custom Vision Portal and sign in.

Create a new Project and follow the prompts.

The Getting Started wizard will then walk you through the setup.

By the end we have created two Tags: ‘Redback’ and ‘NotRedback’, uploaded at least 15 images for each, linked the images to the Tags and trained the model by hitting the Train button (I did the Quick Train to preserve my free cycle allocation). Do not forget to hit the Publish button on the Performance tab to make your training model/iteration accessible.

The Quick Test button allows you to test from the portal uploading an image file or providing a URL.

Linking Power Automate and Custom Vision API

The final link in the chain is linking Power Automate to the Custom Vision API. This was the step which took me the longest to figure out. To set up the Connection, you will need:

- Connection Name: Whatever you like

- Prediction Key: This can be found by clicking the ‘Prediction URL’ button on the Performance tab.

- Site URL: This is the data center for your model. This took a bit of detective work: by going to the Custom Vision Prediction API Reference ClassifyImageURL page (thank you Olena Grischenko for the tip!) and seeing how it constructed the Request URL, I could work out the components. For me, as I based my service out of the East US center, the value is eastus.api.cognitive.microsoft.com

You will then need to populate the values in the flow Step:

- Project ID: You can either get this from the page URL when in the project in the Custom Vision API portal, or by clicking the Cog icon in the Custom Vision portal at the top right.

- Published Name: The one value that took me the longest to figure out (Seriously Microsoft, make it easy for the dev-muggles!) What it should be called is Iteration Name. The default value is ‘Iteration1’. In this case trawling through sample code online showed that ‘Iteration Name’ = ‘Published Name’ = ‘Model Name’
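To show how the Site URL, Project ID and Published Name fit together, here is a rough Python sketch of the kind of Request URL the ClassifyImageUrl reference page builds. Treat it as a sketch under assumptions rather than the definitive format: the `v3.0` path segment, the `Prediction-Key` header and the `Url` body field are my understanding of that API version, and the project ID, key and image address are placeholders. The 'Prediction URL' button on the Performance tab shows the exact values for your own project.

```python
import json
import urllib.request


def build_classify_url(site_url, project_id, published_name):
    """Assemble the classify-by-URL endpoint from the three connection values."""
    return (
        f"https://{site_url}/customvision/v3.0/Prediction/"
        f"{project_id}/classify/iterations/{published_name}/url"
    )


def build_request(site_url, project_id, published_name, prediction_key, image_url):
    """Prepare (but do not send) a POST request asking for a prediction."""
    body = json.dumps({"Url": image_url}).encode("utf-8")
    return urllib.request.Request(
        build_classify_url(site_url, project_id, published_name),
        data=body,
        headers={
            "Prediction-Key": prediction_key,
            "Content-Type": "application/json",
        },
        method="POST",
    )


# Placeholder values for illustration only.
example_request = build_request(
    site_url="eastus.api.cognitive.microsoft.com",
    project_id="00000000-0000-0000-0000-000000000000",
    published_name="Iteration1",
    prediction_key="YOUR-PREDICTION-KEY",
    image_url="https://example.com/spider.jpg",
)

if __name__ == "__main__":
    print(example_request.full_url)
```

Nothing is sent over the network here. Passing the prepared request to `urllib.request.urlopen` would make the actual call, which is the job the Power Automate step does for you.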

Conclusions

While this is a fun and trivial example (unless you have just been bitten by a spider and are not sure), it is clear to see there is a lot of possibility in this technology. The Cognitive Services are already being used to help manage fauna and flora in Australia’s Kakadu National Park, as well as to manage fish populations in Darwin Harbour. I can also see this service bringing semi-automated medical diagnostic services to the world, and being used for the auto-assessment of parts before they malfunction and for quality control of parts in mass production. Considering where we have come from, these are very exciting times.

Have you looked at AI Builder (https://powerapps.microsoft.com/en-us/ai-builder/)? It allows you to do what you did above without needing to interact directly with the Vision APIs.

I played with AI Builder when it first came out and had mixed results, but I probably should revisit it 🙂
